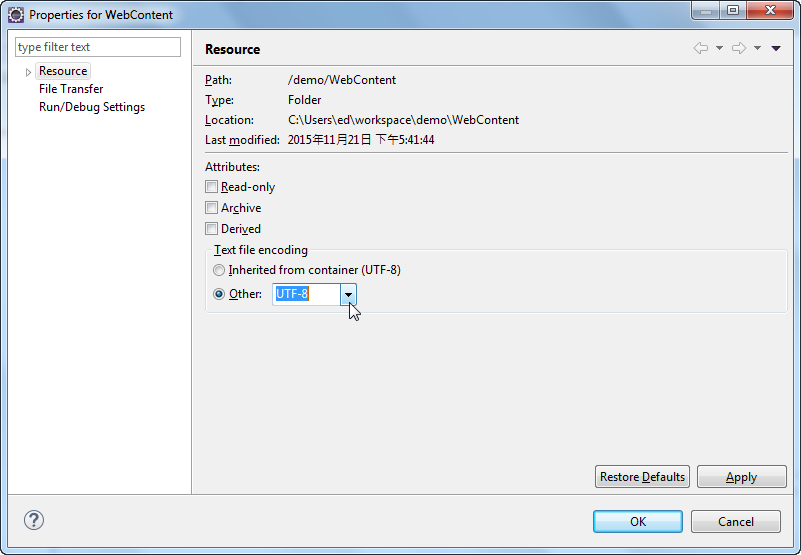

- Change text encoding

- Change text encoding pdf

- Change text encoding software

- Change text encoding code

- Change text encoding windows

There should be almost no need to ever type Encoding.Default in your code: it is a badly named property that is best ignored. StreamReader will try to detect the encoding from the byte order mark, and falls back to UTF-8 if that fails, so just let it do that. The easiest thing is to just do:

oStreamReader = new StreamReader(_sFileName)

oStreamReader = new StreamReader(_sFileName, )

But our customer's requirement is that the font encoding should not be Custom encoding; he expects a font encoding other than Custom encoding.
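The detection behaviour described above (look for a byte order mark, otherwise assume UTF-8) can be sketched in a few lines. This is a Python illustration of the idea, not the .NET implementation; the function name `decode_with_bom_detection` and the table `_BOMS` are hypothetical names introduced only for this example.

```python
import codecs

# Byte order marks, checked longest-first so that the UTF-32 LE mark
# (FF FE 00 00) is not mistaken for the UTF-16 LE mark (FF FE).
_BOMS = [
    (codecs.BOM_UTF32_LE, "utf-32-le"),
    (codecs.BOM_UTF32_BE, "utf-32-be"),
    (codecs.BOM_UTF8, "utf-8"),
    (codecs.BOM_UTF16_LE, "utf-16-le"),
    (codecs.BOM_UTF16_BE, "utf-16-be"),
]


def decode_with_bom_detection(data: bytes) -> str:
    """Decode bytes using the BOM if one is present, otherwise assume UTF-8."""
    for bom, encoding in _BOMS:
        if data.startswith(bom):
            # Strip the mark itself before decoding the payload.
            return data[len(bom):].decode(encoding)
    return data.decode("utf-8")
```

A file written as UTF-16 LE with a BOM and a file written as plain UTF-8 without one both decode correctly through the same call, which is why passing an explicit default encoding is rarely needed.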

Change text encoding pdf

UTF-8 is often identical to ANSI code pages in the U+00 to U+7F range (the ASCII range), but it can encode characters outside the ASCII range without loss.

Yes, exactly, you are correct: we are using some math equations in the PDF file, and that is why we are getting this encoding.

HTML encoding transforms all special HTML characters into something called HTML entities. This allows you, for example, to put HTML inside of HTML. You can also encode all letters in the text to HTML entities (not just the special HTML symbols), and you can adjust the HTML entity number base (dec or hex).
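As a rough illustration of the two HTML-encoding modes mentioned above, the Python sketch below escapes only the special characters with the standard library's `html.escape`, and then encodes every character as a numeric entity in decimal or hex. The helper `encode_all_to_entities` and its `base` parameter are hypothetical names for this example, not part of any tool referred to in the text.

```python
import html


def encode_all_to_entities(text: str, base: str = "dec") -> str:
    """Encode every character as a numeric HTML entity (decimal or hex)."""
    if base == "hex":
        return "".join(f"&#x{ord(ch):x};" for ch in text)
    return "".join(f"&#{ord(ch)};" for ch in text)


# Escape only the special HTML characters (<, >, &, and quotes).
escaped = html.escape("<b>bold</b> & more")
# escaped == "&lt;b&gt;bold&lt;/b&gt; &amp; more"

# Encode all letters, in both number bases.
dec_entities = encode_all_to_entities("Hi")         # "&#72;&#105;"
hex_entities = encode_all_to_entities("Hi", "hex")  # "&#x48;&#x69;"
```

Both forms decode back to the original text with `html.unescape`, so the escaped markup can be embedded in another HTML document and displayed literally.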

Change text encoding code

The active code page may be an ANSI code page, which includes the ASCII character set along with additional characters that vary by code page. Because all Default encodings based on ANSI code pages lose data, consider using the Encoding.UTF8 encoding instead.

HTML-encoding is also known as HTML-escaping.
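The data loss is easy to reproduce. The Python sketch below round-trips the same string through UTF-8 and through Windows-1252, which is used here only as an assumed example of an ANSI code page; the sample string is made up for the illustration.

```python
text = "naïve 中"

# UTF-8 can represent every character, so the round trip is lossless.
utf8_roundtrip = text.encode("utf-8").decode("utf-8")

# Windows-1252 has a slot for "ï" but none for "中", so the Chinese
# character is replaced with "?" and cannot be recovered afterwards.
cp1252_roundtrip = text.encode("cp1252", errors="replace").decode("cp1252")

print(utf8_roundtrip)    # naïve 中
print(cp1252_roundtrip)  # naïve ?
```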

Change text encoding windows

In .NET Framework on the Windows desktop, the Default property always gets the system's active code page and creates an Encoding object that corresponds to it. In .NET Framework, it's your configured Windows code page.

For example, let's create a text file with some Chinese characters in it. Try to use findstr to find one of the Chinese characters, and it will not find anything:

PS C:\> Get-Content c:\test.txt | findstr /c:中

But the same command works in Cmd.exe:

PS C:\> cmd /c "findstr /c:中 test.txt"

You can change the value of the $OutputEncoding variable to handle the encoding format:

PS D:\PowerShell-master> $OutputEncoding = [System.Text.Encoding]::Unicode

Change text encoding software

In PowerShell v3 or higher, you can use $PSDefaultParameterValues to change the encoding of any cmdlets and advanced functions that accept an -Encoding parameter:

$PSDefaultParameterValues = @{ '*:Encoding' = 'utf8' }

If you place this in your $PROFILE, cmdlets such as Out-File and Set-Content will use UTF-8 encoding by default. This encoding format has no relation to the $OutputEncoding variable, which is discussed next.

Passing output from PowerShell to Native Application

When we pipe output data from PowerShell cmdlets into native applications, the output encoding is controlled by the $OutputEncoding variable, which is set to ASCII by default. $OutputEncoding is a system-generated variable, and its value can be obtained simply by typing the variable name at the PowerShell prompt:

PS D:\PowerShell-master> $OutputEncoding

EncoderFallback :

DecoderFallback :

It is set to ASCII because most applications do not handle Unicode correctly. This may result in cases where a program on the right side of the pipeline or redirection is not able to read the input data correctly. This becomes especially important if your software supports multiple languages.
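Why an ASCII output encoding makes a downstream search fail can be modelled without PowerShell. In the Python sketch below, `bytes_sent_to_native` is a hypothetical stand-in for the text-to-bytes step on the pipe, and the search needle is assumed to arrive at the native program as UTF-8 bytes; neither is taken from PowerShell's actual implementation.

```python
def bytes_sent_to_native(text: str, output_encoding: str) -> bytes:
    """Model the text-to-bytes conversion that happens on the pipe."""
    # Characters the encoding cannot represent are replaced with "?".
    return text.encode(output_encoding, errors="replace")


line = "abc中def"
needle = "中".encode("utf-8")  # what the native program searches for

ascii_bytes = bytes_sent_to_native(line, "ascii")
utf8_bytes = bytes_sent_to_native(line, "utf-8")

print(ascii_bytes)            # b'abc?def'
print(needle in ascii_bytes)  # False: the character was lost on the pipe
print(needle in utf8_bytes)   # True: the bytes survive intact
```

Once the character has been replaced with a question mark, no search on the receiving side can recover it, which is why the fix has to be applied to the sender's output encoding.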

This blog post discusses the output encoding format used when data is passed from one PowerShell cmdlet to another or to other applications. This is a rarely understood feature unless you are trying to write a module that integrates PowerShell with other software.

Passing output between PowerShell cmdlets

Strings inside PowerShell are 16-bit Unicode, instances of System.String. So by default, when you pipe output from one cmdlet to another, it is passed as 16-bit Unicode, or UTF-16. Since Out-File is also a PowerShell cmdlet, it writes Unicode text to the generated file. The same goes for the redirection operators > and >> in PowerShell. As of PowerShell 5.1 (the latest version at the time of writing), there is no way to change the encoding of the output redirection operators > and >>, and they invariably create UTF-16 LE files with a BOM (byte-order mark).
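To make "UTF-16 LE with a BOM" concrete, the Python sketch below builds the byte sequence such a file would contain for a short string and compares it with the UTF-8 form. The helper `utf16_le_with_bom` is a hypothetical name for this example; it only models the file contents and does not call PowerShell.

```python
import codecs


def utf16_le_with_bom(text: str) -> bytes:
    """Return the bytes of a UTF-16 LE file that starts with a BOM."""
    return codecs.BOM_UTF16_LE + text.encode("utf-16-le")


data = utf16_le_with_bom("hi")
print(data)                      # b'\xff\xfeh\x00i\x00'
print(len(data))                 # 6 bytes: 2 for the BOM, 2 per character
print(len("hi".encode("utf-8")))  # 2 bytes for the same text in UTF-8

# A reader that honours the BOM recovers the original text.
print(data.decode("utf-16"))     # hi
```

A tool that expects single-byte text sees the leading FF FE and the interleaved zero bytes as garbage, which is the usual symptom when a redirected file is opened by a program that assumes ASCII or UTF-8.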